What Is Computer Programming?

Introduction

Today, most people don't need to know how a computer works. Most people can simply turn on a computer or a mobile phone and point at some little graphical object on the display, click a button or swipe a finger or two, and the computer does something. An example would be to get weather information from the net and display it. How to interact with a computer program is all the average person needs to know.

proc-ess / Noun: A series of actions or steps taken to achieve an end. pro-ce-dure / Noun: A series of actions conducted in a certain order or manner.

But, since you are going to learn how to write computer programs, you need to know a little bit about how a computer works. Your job will be to instruct the computer to do things. Basically, writing software (computer programs) is describing how to do something. In its simplest form, it is a lot like writing down the steps it takes to do something - a process, a procedure. The lists of instructions that you will write are computer programs, and the stuff that these instructions manipulate are different types of objects, e.g., numbers, words, graphics, etc...

So, writing a computer program can be like composing music, like designing a house, like creating lots of stuff. It has been argued that in its current state it is an art, not engineering.

An important reason to consider learning about how to program a computer is that the concepts underlying this will be valuable to you, regardless of whether or not you go on to make a career out of it. One thing that you will learn quickly is that a computer is very dumb but obedient. It does exactly what you tell it to do, which is not necessarily what you wanted. Programming will help you learn the importance of clarity of expression.

A deep understanding of programming, in particular the notions of successive decomposition as a mode of analysis and debugging of trial solutions, results in significant educational benefits in many domains of discourse, including those unrelated to computers and information technology per se. (Seymour Papert, in "Mindstorms")

It has often been said that a person does not really understand something until he teaches it to someone else. Actually a person does not really understand something until after teaching it to a computer, i.e., express it as an algorithm." (Donald Knuth, in "American Mathematical Monthly," 81)

Computers have proven immensely effective as aids to clear thinking. Muddled and half-baked ideas have sometimes survived for centuries because luminaries have deluded themselves as much as their followers or because lesser lights, fearing ridicule, couldn't summon up the nerve to admit that they didn't know what the Master was talking about. A test as near foolproof as one could get of whether you understand something as well as you think is to express it as a computer program and then see if the program does what it is supposed to. Computers are not sycophants and won't make enthusiastic noises to ensure their promotion or camouflage what they don't know. What you get is what you said. (James P. Hogan in "Mind Matters")

But, most of all, it can be lots of fun! An associate once said to me "I can't believe I'm paid so well for something I love to do."

Just what do instructions a computer understands look like? And, what kinds of objects do the instructions manipulate? By the end of this lesson you will be able to answer these questions. But first let's try to write a program in the English language.

Programming Using the English Language

Remember what I said in the Introduction to this lesson?

Writing software, computer programs, is a lot like writing down the steps it takes to do something.

Before we see what a computer programming language looks like, let's use the English language to describe how to do something as a series of steps. A common exercise that really gets you thinking about what computer programming can be like is to describe a process you are familiar with.

Describe how to make a peanut butter and jelly sandwich.

Rather than write my own version of this exercise, I searched the Internet for the words "computer programming sandwich" using Google. One of the hits returned was http://teachers.net/lessons/posts/2166.html. At the link, Deb Sweeney (Tamaqua Area Middle School, Tamaqua, PA) described the problem as:

Objective: Students will write specific and sequential steps on how to make a peanut butter and jelly sandwich. Procedure: Students will write a very detailed and step-by-step paragraph on how to make a peanut butter and jelly sandwich for homework. The next day, the students will then input (read) their instructions to the computer (teacher). The teacher will then "make" the programs, being sure to do exactly what the students said...

When this exercise is directed by an experienced teacher or mentor it is excellent for demonstrating how careful you need to be, how detailed you need to be, when writing a computer program. Here is teacher/mentor support material.

Programming in a natural language, say the full scope of the English language, seems like a very difficult task. But, before moving on to languages we can write programs in today, I want to leave on a high note. Click here to read about how Stephen Wolfram sees programming in a natural language happening.

Programming Languages (High-Level Languages) Almost all of the computer programming these days is done with high-level programming languages. There are lots of them and some are quite old. COBOL, FORTRAN, and Lisp were devised in the 1950s!!! As you will see, high-level languages make it easier to describe the pieces of the program you are creating. They help by letting you concentrate on what you are trying to do rather than that and how you fit it into a computer architecture. They abstract away the specifics of the microprocessor in your computer. And, all come with large sets of common stuff you need to do, called libraries.

In this introduction to programming, you will work with two computer languages: Logo and Java. Logo comes from Bolt, Beranek & Newman (BBN) and Massachusetts Institute of Technology (MIT). Seymour Papert, a scientist at MIT's Artificial Intelligence Laboratory, championed this computer programming language in the 70s. More research of its use in educational settings exists than for any other programming language. In fact, the fairly new Scratch Programming Language (also from MIT) consists of a modern graphical environment on top of Logo-like functionality.

Java is a fairly recent programming language. It appeared in 1995 just as the Internet was starting to get lots of attention. Java was invented by James Gosling, working at Sun Microsystems. It's sort-of a medium-level language. One of the big advantages of learning Java is that there is a lot of software already written ( see: Java Class Library) which will help you write graphical programs that run on the Internet. You get to take advantage of software that thousands of programmers have already written. Java is used in a variety of applications, from mobile phones to massive Internet data manipulation. You get to work with window objects, Internet connection objects, database access objects and thousands of others. Java is the language used to write Android apps.

So, why do these lessons start with the Logo programming language? I like the Logo language to teach introductory programming with because it is very easy to learn. The faster you get to write interesting computer programs the more fun you will have. And... having fun is important!

But do not let Logo's simplicity fool you into thinking it is just a toy programming language. Logo is a derivative of the Lisp programming language, a very powerful language still used today to tackle some of the most advanced research being performed. Brian Harvey shows the power of Logo in his Computer Science Logo Style series of books. Volume 3: Beyond Programming covers six college-level computer science topics with Logo.

Both Logo and Java have the same sort of stuff needed to write computer programs. . They have the ability to manipulate objects including lots of arithmetic functions, you can compare objects and do different things depending on the outcome of the comparison, and they provide ways to control the order in which instructions get performed. And... that's what programming is really all about... as you will see.

Just to give you a feel for what programming is like in a high-level language, here's a program that greets us, pretending to know English.

print [Hello world!]

This is one of the simplest programs that can be written in most high-level languages. PRINT is a command in Logo When it is performed, it takes whatever follows it and displays it. The "Hello world" program is famous; checkout its description on Wikipedia by clicking here.

In addition to commands, Logo has operators that output some sort of result. Although it's a bit contrived, here is a program that displays the product of a constant number (ten) and a random number in the range of zero through fourteen.

print product 10 (random 15)

In this code, the PRINT command's input is the output of the PRODUCT operator. PRODUCT multiplies whatever follows it by whatever follows that and outputs the result. So, PRODUCT needs two inputs. RANDOM is an operator that outputs a number that is greater than or equal to zero (0) and less than the number following it. So, PRODUCT gets its second input from the output of RANDOM. Confusing? Don't worry, we will get into the details of operators in lesson 8.

Finally, here's an advanced snipet of a program written in Logo, just to give you a feeling for what it looks like. Here is a procedure definition for selecting the maximum number from a list of numbers.

to getMax :maxNum :numList if empty? :numList [output :maxNum] if greater? (first :numList) :maxNum [output getMax (first :numList) (butfirst :numList)] output getMax :maxNum (butfirst :numList) end

Do not worry if this seems confusing. It will be a while before you will be writing anything like this. But, I want you to see that the words that make up the program's instructions and the instructions themselves are similar to English sentences, e.g., the first line and a half in the procedure are similar to the sentences:

If the list of numbers to process is empty then output the maximum number processed. If the first number in the list is greater than the maximum number processed so far then ...

So, a high-level programming language is *sort-of* like English, just one step closer to what the language a computer really understands looks like. Now let's move on to what a computer's native language looks like when it is given a symbolic representation.

Programming Languages (Assembler Language)

One step above a computer's native language is assembler language. In an assembler language, everything is given human-friendly symbolic names. The programmer works with operations that the microprocessor knows how to do, they have symbolic names. The microprocessor's registers and addresses in the computer's memory can also be given meaningful names by the programmer. This is actually a very big step over what a computer understands, but still tedious for writing a large program. Assembler language instructions still have a place for little snipits of software that need to interact directly with the microprocessor and/or those that are executed many, many, many times.

Table 1.1 is an example of DEC PDP-10 assembler language, a function that returns the largest integer in a group of them, named NUMARY. The group contains NCOUNT elements.

All rights reserved to http://www.bfoit.org/itp/Programming.html after the test above an image comes, we didn't download the image so visit the link above for more details...So keep reading

I'm showing you this so that you will have a feel for how primitive computer instruction sets are. I'm not going to go into the details of every instruction. If you want to go through it in detail on your own, the PDP-10 Machine Language is detailed here.

A few points I want to expose you to are the general kinds of things being done.

But there is a problem with assembler language - it is unique for every computer architecture. Although most deskside and notebook computers these days use the Intel architecture, this is only recently the case. And... a variety of computer architectures are commonly used in game systems, smart phones, tablets, automobiles, appliances, etc...

Ok, we are almost at a point where I can show you machine language, the *native* language of a computer. But for you to understand it, I'm going to have to explain how everything is represented in a computer.

Inside Computers - Bits and Pieces

Your computer successfully creates the illusion that it contains photographs, letters, songs, and movies. All it really contains is bits, lots of them, patterned in ways you can't see. Your computer was designed to store just bits - all the files and folders and different kinds of data are illusions created by computer programmers. (Hal Abelson, Ken Ledeen, Harry Lewis, in "Blown to Bits")

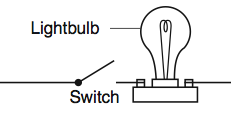

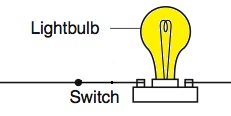

Basically, computer instructions perform operations on groups of bits. A bit is either on or off, like a lightbulb. Figure 1.1_a shows an open switch and a lightbulb that is off - just like a transistor in a computer represents a bit with the value: zero. Figure 1.1_b shows the switch in the closed position and the lightbulb is on, again just like a transistor in a computer representing a bit with the value: one.

Introduction

Today, most people don't need to know how a computer works. Most people can simply turn on a computer or a mobile phone and point at some little graphical object on the display, click a button or swipe a finger or two, and the computer does something. An example would be to get weather information from the net and display it. How to interact with a computer program is all the average person needs to know.

proc-ess / Noun: A series of actions or steps taken to achieve an end.

pro-ce-dure / Noun: A series of actions conducted in a certain order or manner.

But since you are going to learn how to write computer programs, you need to know a little bit about how a computer works. Your job will be to instruct the computer to do things. Basically, writing software (computer programs) is describing how to do something. In its simplest form, it is a lot like writing down the steps it takes to do something - a process, a procedure. The lists of instructions that you will write are computer programs, and the things these instructions manipulate are different types of objects, e.g., numbers, words, and graphics.

So, writing a computer program can be like composing music, like designing a house, like creating lots of stuff. It has been argued that in its current state it is an art, not engineering.

An important reason to consider learning about how to program a computer is that the concepts underlying this will be valuable to you, regardless of whether or not you go on to make a career out of it. One thing that you will learn quickly is that a computer is very dumb but obedient. It does exactly what you tell it to do, which is not necessarily what you wanted. Programming will help you learn the importance of clarity of expression.

A deep understanding of programming, in particular the notions of successive decomposition as a mode of analysis and debugging of trial solutions, results in significant educational benefits in many domains of discourse, including those unrelated to computers and information technology per se. (Seymour Papert, in "Mindstorms")

It has often been said that a person does not really understand something until he teaches it to someone else. Actually a person does not really understand something until after teaching it to a computer, i.e., express it as an algorithm. (Donald Knuth, in "American Mathematical Monthly," 81)

Computers have proven immensely effective as aids to clear thinking. Muddled and half-baked ideas have sometimes survived for centuries because luminaries have deluded themselves as much as their followers or because lesser lights, fearing ridicule, couldn't summon up the nerve to admit that they didn't know what the Master was talking about. A test as near foolproof as one could get of whether you understand something as well as you think is to express it as a computer program and then see if the program does what it is supposed to. Computers are not sycophants and won't make enthusiastic noises to ensure their promotion or camouflage what they don't know. What you get is what you said. (James P. Hogan in "Mind Matters")

But, most of all, it can be lots of fun! An associate once said to me "I can't believe I'm paid so well for something I love to do."

Just what do instructions a computer understands look like? And, what kinds of objects do the instructions manipulate? By the end of this lesson you will be able to answer these questions. But first let's try to write a program in the English language.

Programming Using the English Language

Remember what I said in the Introduction to this lesson?

Writing software, computer programs, is a lot like writing down the steps it takes to do something.

Before we see what a computer programming language looks like, let's use the English language to describe how to do something as a series of steps. A common exercise that really gets you thinking about what computer programming can be like is to describe a process you are familiar with.

Describe how to make a peanut butter and jelly sandwich.

Rather than write my own version of this exercise, I searched the Internet for the words "computer programming sandwich" using Google. One of the hits returned was http://teachers.net/lessons/posts/2166.html. At the link, Deb Sweeney (Tamaqua Area Middle School, Tamaqua, PA) described the problem as:

Objective: Students will write specific and sequential steps on how to make a peanut butter and jelly sandwich.

Procedure: Students will write a very detailed and step-by-step paragraph on how to make a peanut butter and jelly sandwich for homework. The next day, the students will then input (read) their instructions to the computer (teacher). The teacher will then "make" the programs, being sure to do exactly what the students said...

When this exercise is directed by an experienced teacher or mentor it is excellent for demonstrating how careful you need to be, how detailed you need to be, when writing a computer program. Here is teacher/mentor support material.

Programming in a natural language, say the full scope of the English language, seems like a very difficult task. But before moving on to languages we can write programs in today, I want to end this section on a high note: Stephen Wolfram has written about how he sees programming in a natural language happening.

Programming Languages (High-Level Languages)

Almost all computer programming these days is done with high-level programming languages. There are lots of them, and some are quite old: COBOL, FORTRAN, and Lisp were devised in the 1950s! As you will see, high-level languages make it easier to describe the pieces of the program you are creating. They help by letting you concentrate on what you are trying to do rather than on how you fit it into a computer architecture. They abstract away the specifics of the microprocessor in your computer. And they all come with large collections of ready-made code for common tasks, called libraries.

In this introduction to programming, you will work with two computer languages: Logo and Java. Logo comes from Bolt, Beranek & Newman (BBN) and the Massachusetts Institute of Technology (MIT). Seymour Papert, a scientist at MIT's Artificial Intelligence Laboratory, championed this computer programming language in the 70s. More research on its use in educational settings exists than for any other programming language. In fact, the fairly new Scratch Programming Language (also from MIT) consists of a modern graphical environment on top of Logo-like functionality.

Java is a fairly recent programming language. It appeared in 1995 just as the Internet was starting to get lots of attention. Java was invented by James Gosling, working at Sun Microsystems. It's sort-of a medium-level language. One of the big advantages of learning Java is that there is a lot of software already written (see: Java Class Library) which will help you write graphical programs that run on the Internet. You get to take advantage of software that thousands of programmers have already written. Java is used in a variety of applications, from mobile phones to massive Internet data manipulation. You get to work with window objects, Internet connection objects, database access objects and thousands of others. Java is also a language widely used to write Android apps.

So, why do these lessons start with the Logo programming language? I like to teach introductory programming with Logo because it is very easy to learn. The faster you get to write interesting computer programs, the more fun you will have. And... having fun is important!

But do not let Logo's simplicity fool you into thinking it is just a toy programming language. Logo is a derivative of the Lisp programming language, a very powerful language still used today to tackle some of the most advanced research being performed. Brian Harvey shows the power of Logo in his Computer Science Logo Style series of books. Volume 3: Beyond Programming covers six college-level computer science topics with Logo.

Both Logo and Java have the same sort of stuff needed to write computer programs. They can manipulate objects (including lots of arithmetic functions), they let you compare objects and do different things depending on the outcome of the comparison, and they provide ways to control the order in which instructions get performed. And... that's what programming is really all about... as you will see.

Just to give you a feel for what programming is like in a high-level language, here's a program that greets us, pretending to know English.

print [Hello world!]

This is one of the simplest programs that can be written in most high-level languages. PRINT is a command in Logo. When it is performed, it takes whatever follows it and displays it. The "Hello world" program is famous; check out its description on Wikipedia.
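For comparison, here is a minimal sketch of the same greeting in Java, the second language used in these lessons. The class name HelloWorld and the helper greeting() are arbitrary choices made for this sketch, not anything the lessons prescribe.

```java
// A minimal sketch: a Java counterpart of Logo's  print [Hello world!]
class HelloWorld {
    // Builds the greeting text; kept separate so it is easy to check.
    static String greeting() {
        return "Hello world!";
    }

    public static void main(String[] args) {
        // System.out.println displays its input, much like Logo's PRINT.
        System.out.println(greeting());
    }
}
```

Notice how much more ceremony Java needs for the same result; that is one reason these lessons begin with Logo.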

In addition to commands, Logo has operators that output some sort of result. Although it's a bit contrived, here is a program that displays the product of a constant number (ten) and a random number in the range of zero through fourteen.

print product 10 (random 15)

In this code, the PRINT command's input is the output of the PRODUCT operator. PRODUCT multiplies whatever follows it by whatever follows that and outputs the result. So, PRODUCT needs two inputs. RANDOM is an operator that outputs a number that is greater than or equal to zero (0) and less than the number following it. So, PRODUCT gets its second input from the output of RANDOM. Confusing? Don't worry, we will get into the details of operators in lesson 8.
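The same idea can be sketched in Java. This is only an illustration under a couple of assumptions: the class and method names are made up for this example, and java.util.Random's nextInt(15) stands in for Logo's RANDOM 15 because it also outputs a number from 0 up to, but not including, 15.

```java
import java.util.Random;

class RandomProduct {
    // Mirrors Logo's  product 10 (random 15)
    static int randomProduct(Random rng) {
        int n = rng.nextInt(15);   // like RANDOM 15: 0 <= n < 15
        return 10 * n;             // like PRODUCT 10 n
    }

    public static void main(String[] args) {
        // The output of randomProduct becomes the input of println,
        // just as PRODUCT's output becomes PRINT's input.
        System.out.println(randomProduct(new Random()));
    }
}
```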

Finally, here's an advanced snippet of a program written in Logo, just to give you a feeling for what it looks like. Here is a procedure definition for selecting the maximum number from a list of numbers.

to getMax :maxNum :numList
  if empty? :numList [output :maxNum]
  if greater? (first :numList) :maxNum [output getMax (first :numList) (butfirst :numList)]
  output getMax :maxNum (butfirst :numList)
end

Do not worry if this seems confusing. It will be a while before you will be writing anything like this. But, I want you to see that the words that make up the program's instructions and the instructions themselves are similar to English sentences, e.g., the first line and a half in the procedure are similar to the sentences:

If the list of numbers to process is empty then output the maximum number processed. If the first number in the list is greater than the maximum number processed so far then ...
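To see how the same steps read in the other language used in these lessons, here is a rough Java sketch with the same recursive shape as getMax. The class name MaxFinder is invented for this example, and the list handling (get(0) for FIRST, subList for BUTFIRST) is just one way to mimic the Logo operators.

```java
import java.util.Arrays;
import java.util.List;

class MaxFinder {
    // Recursive sketch with the same shape as the Logo getMax procedure.
    static int getMax(int maxNum, List<Integer> numList) {
        if (numList.isEmpty()) {
            return maxNum;                      // empty list: output the maximum so far
        }
        int first = numList.get(0);             // Logo: first :numList
        List<Integer> rest = numList.subList(1, numList.size());  // Logo: butfirst :numList
        if (first > maxNum) {
            return getMax(first, rest);         // found a new maximum
        }
        return getMax(maxNum, rest);            // keep the old maximum
    }

    public static void main(String[] args) {
        System.out.println(getMax(0, Arrays.asList(3, 9, 4)));   // prints 9
    }
}
```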

So, a high-level programming language is *sort-of* like English, just one step closer to the language a computer really understands. Now let's move on to what a computer's native language looks like when it is given a symbolic representation.

Programming Languages (Assembler Language)

One step above a computer's native language is assembler language. In an assembler language, everything is given human-friendly symbolic names. The programmer works with the operations that the microprocessor knows how to do, each of which has a symbolic name. The microprocessor's registers and addresses in the computer's memory can also be given meaningful names by the programmer. This is actually a very big step up from what a computer understands, but it is still tedious for writing a large program. Assembler language instructions still have a place for little snippets of software that need to interact directly with the microprocessor and/or those that are executed many, many, many times.

Table 1.1 is an example of DEC PDP-10 assembler language, a function that returns the largest integer in a group of them, named NUMARY. The group contains NCOUNT elements.

(Table 1.1 is not reproduced here; see the original page at http://www.bfoit.org/itp/Programming.html for the listing.)

I'm showing you this so that you will have a feel for how primitive computer instruction sets are. I'm not going to go into the details of every instruction. If you want to go through it in detail on your own, the PDP-10 Machine Language is detailed here.

A few points I want to expose you to are the general kinds of things being done:

- moving objects (numbers) into the computer's registers - very fast temporary storage,

- decrementing the value in a register,

- comparing the contents of a register to some value in memory, and

- transferring control to an instruction that's not in the standard sequential order - down the page.
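The kinds of steps listed above can be imitated in a high-level language. The following Java sketch is not a translation of the PDP-10 listing; it is a hypothetical illustration in which ordinary variables play the role of registers, the names numary and ncount simply echo NUMARY and NCOUNT, and the group is assumed to contain at least one number.

```java
class LargestInGroup {
    // Finds the largest integer among the first ncount elements of numary.
    static int largest(int[] numary, int ncount) {
        int max = numary[0];           // move the first number into a "register"
        int remaining = ncount - 1;    // counter "register": elements left to examine
        int index = 1;
        while (remaining > 0) {        // transfer control back up while numbers remain
            if (numary[index] > max) { // compare a register with a value in memory
                max = numary[index];   // move the larger value into the register
            }
            index = index + 1;
            remaining = remaining - 1; // decrement the value in the counter register
        }
        return max;
    }

    public static void main(String[] args) {
        int[] numary = {12, 7, 31, 5};
        System.out.println(largest(numary, numary.length));   // prints 31
    }
}
```

Each line inside the loop corresponds roughly to one primitive operation, which is why even a small task takes many instructions at the assembler level.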

But there is a problem with assembler language - it is unique to each computer architecture. Although most deskside and notebook computers these days use the Intel architecture, this has only recently been the case. And... a variety of other computer architectures are commonly used in game systems, smartphones, tablets, automobiles, appliances, and so on.

Ok, we are almost at a point where I can show you machine language, the *native* language of a computer. But for you to understand it, I'm going to have to explain how everything is represented in a computer.

Inside Computers - Bits and Pieces

Your computer successfully creates the illusion that it contains photographs, letters, songs, and movies. All it really contains is bits, lots of them, patterned in ways you can't see. Your computer was designed to store just bits - all the files and folders and different kinds of data are illusions created by computer programmers. (Hal Abelson, Ken Ledeen, Harry Lewis, in "Blown to Bits")

Basically, computer instructions perform operations on groups of bits. A bit is either on or off, like a lightbulb. Figure 1.1_a shows an open switch and a lightbulb that is off - just like a transistor in a computer represents a bit with the value: zero. Figure 1.1_b shows the switch in the closed position and the lightbulb is on, again just like a transistor in a computer representing a bit with the value: one.

A microprocessor, which is the heart of a computer, is very primitive but very fast. It takes groups of bits and moves their contents around, adds pairs of groups of bits together, subtracts one group of bits from another, compares a pair of groups, and so on.

Inside a microprocessor, at a very low level, everything is simply a bunch of switches, also known as bits - things that are either on or off! Time to expand on how this is done; first let's explore how groups of bits can be used to form numbers.

Numeric Representation With Bits

There are only 10 different kinds of people in the world: those who know binary and those who don't. - Anonymous

Computers are full of zillions of bits that are either on or off. The way we talk about the value of a bit in the electrical engineering and computer science communities is first as a logical value (true if on, false if off) and secondly as a binary number (1 if the bit is on and 0 if it's off). Most bits in a computer are manipulated in groups, so we humans need a way to describe groups of bits, the things/objects a computer manipulates. Today, bits are most often grouped in quantities of 8, 16, 32, and 64.

Think about how you write down sequential numbers starting with zero: 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, etc... Our decimal number system has ten symbols. In this sequential series, when we ran out of symbols, we combined them. You learned how to do this so long ago, in grade school, that today you just naturally think in terms of single digit numbers, then tens, hundreds, thousands, etc... The decimal number 1234 is one thousand, two hundreds, three tens, and four units.

So, how does the binary number system used inside computers work?

Well, with only two symbols, we would write the same sequential numbers as above: 0, 1, 10, 11, 100, 101, 110, 111, 1000, 1001, 1010, 1011. The decimal number 1234 in binary is 10011010010.
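If you would like to check this counting for yourself, here is a small Java sketch. It is only one way to do the conversion (repeated division by two), and the names BinaryCounting and toBinary are made up for this example.

```java
class BinaryCounting {
    // Converts a non-negative number to binary by repeatedly dividing by two.
    static String toBinary(int n) {
        if (n == 0) {
            return "0";
        }
        StringBuilder bits = new StringBuilder();
        while (n > 0) {
            bits.insert(0, n % 2);   // the remainder is the next bit, right to left
            n = n / 2;
        }
        return bits.toString();
    }

    public static void main(String[] args) {
        // Count from zero through eleven in binary.
        for (int i = 0; i <= 11; i++) {
            System.out.print(toBinary(i) + " ");
        }
        System.out.println();
        System.out.println(toBinary(1234));   // prints 10011010010
    }
}
```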

Since even reasonable numbers that we use all the time make for very long binary numbers, the bits are grouped in 3s and 4s, which are simple to convert into numbers in the octal and hexadecimal number systems. For octal, we group three bits together. Take the binary equivalent of decimal 1234, 10011010010, and put spaces in between each group of three bits - starting at the right and going left.

10011010010 = 10 011 010 010

Now use the symbols 0, 1, 2, 3, 4, 5, 6, and 7 (eight symbols, so OCTAL) to replace each group.

10 011 010 010 = 2 3 2 2 = 2322

The octal representations of the binary patterns are certainly easier to read, write, and remember than their binary counterparts. An even more compact representation can be achieved by grouping the bits in chunks of four and converting these to hexadecimal numerals.

When you group four bits together and use sixteen symbols (0, 1, 2, 3, 4, 5, 6, 7, 8, 9, A, B, C, D, E, and F) as their abbreviations, you have a hexadecimal representation.

10011010010 = 100 1101 0010 = 4D2
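The grouping trick for both octal and hexadecimal can be sketched in a few lines of Java. This is an illustrative sketch, not a standard library routine; the names BitGrouping, SYMBOLS, and regroup are invented for this example.

```java
class BitGrouping {
    // One symbol per possible group value; octal only uses the first eight.
    static final String SYMBOLS = "0123456789ABCDEF";

    // Splits a binary string into groups of groupSize bits, starting at the
    // right and going left, and replaces each group with a single symbol.
    // Use 3 bits per group for octal and 4 bits per group for hexadecimal.
    static String regroup(String binary, int groupSize) {
        StringBuilder out = new StringBuilder();
        for (int end = binary.length(); end > 0; end -= groupSize) {
            int start = Math.max(0, end - groupSize);   // leftmost group may be short
            int value = Integer.parseInt(binary.substring(start, end), 2);
            out.insert(0, SYMBOLS.charAt(value));
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(regroup("10011010010", 3));   // prints 2322
        System.out.println(regroup("10011010010", 4));   // prints 4D2
    }
}
```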

As you continue to explore how computers work, you'll hear more about numbers expressed in octal and hex; these are just more manageable representations of binary information - the digital world.

Table 1.2 compares the decimal, binary, octal, and hexadecimal number systems.

(Table 1.2 is not reproduced here; see the original page at http://www.bfoit.org/itp/Programming.html.)

So, if the most common groupings of bits in a computer are 8, 16, 32, and 64, what kinds of numbers can these groups represent?

A group of eight bits has binary values 00000000 through 11111111, or expressed in decimal 0 through 255. A group of sixteen bits has binary values 0000000000000000 through 1111111111111111, or decimal 0 through 65535. I'm not going to type in binary representations for groups of 32 and 64 bits. The range of decimal values for a group of 32 bits is 0 through 4,294,967,295. The range of decimal values for a group of 64 bits is 0 through 18,446,744,073,709,551,615 - or almost eighteen and a half quintillion.
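These upper limits all follow one pattern: a group of n bits with every bit on has the value 2^n - 1. Here is a short Java sketch that computes them. It uses java.math.BigInteger because the 64-bit limit does not fit in Java's ordinary signed long type; the names UnsignedRange and maxUnsigned are made up for this example.

```java
import java.math.BigInteger;

class UnsignedRange {
    // Largest value a group of bits can hold when every bit is on: 2^bits - 1.
    static BigInteger maxUnsigned(int bits) {
        return BigInteger.ONE.shiftLeft(bits).subtract(BigInteger.ONE);
    }

    public static void main(String[] args) {
        for (int bits : new int[] {8, 16, 32, 64}) {
            System.out.println(bits + " bits: 0 through " + maxUnsigned(bits));
        }
    }
}
```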

But wait... these numbers are all positive (Whole Numbers). If we are going to allow for subtraction operations on numbers, which can result in negative numbers, we need Integers. Modern computers use one bit in each of the groups to represent the sign (positive or negative) when the groups are used to represent integers. Table 1.3 shows the range of numbers that can be represented with groups of 8, 16, 32, and 64 bits.
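One way to see these signed ranges is to ask Java, whose byte, short, int, and long types are signed groups of 8, 16, 32, and 64 bits. The sketch below simply reports the limits Java defines for those types (Java stores them in a scheme called two's complement); the names SignedRanges and range are invented for this example.

```java
class SignedRanges {
    // Returns {smallest, largest} for Java's signed integer type of the given width.
    static long[] range(int bits) {
        switch (bits) {
            case 8:  return new long[] {Byte.MIN_VALUE, Byte.MAX_VALUE};
            case 16: return new long[] {Short.MIN_VALUE, Short.MAX_VALUE};
            case 32: return new long[] {Integer.MIN_VALUE, Integer.MAX_VALUE};
            case 64: return new long[] {Long.MIN_VALUE, Long.MAX_VALUE};
            default: throw new IllegalArgumentException("unsupported width: " + bits);
        }
    }

    public static void main(String[] args) {
        for (int bits : new int[] {8, 16, 32, 64}) {
            long[] r = range(bits);
            System.out.println(bits + " bits: " + r[0] + " through " + r[1]);
        }
    }
}
```

Giving up one bit for the sign roughly halves the largest positive value: eight bits go from 0 through 255 to -128 through 127.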

That's about as deep as I want to get into the representation of numbers in computers and the binary, octal and hexadecimal number systems. Yes, computers have division operators, but I am not going to cover numbers that include fractional parts, i.e., the "rational" and "irrational" numbers, due to the complexity of their implementations. If you want to read more, I googled and found what looks like a good place to start: All About Circuits - Systems of numeration. Read through it and continue on for a few more web pages in the series.

Symbols as Bits - ASCII Characters

Ok, so numbers are simply groups of bits. What other objects will the computer's instructions manipulate? How about the symbols that make up an alphabet?

It should come as no surprise that symbols that make up alphabets are just numbers, groups of bits, too. But how do we know which numbers are used to represent which symbols, or characters as I'm going to call them from this point on?

It's all about standards. In these lessons, we will use the American Standard Code for Information Interchange (ASCII) standard. It is so ubiquitous that it even has its own web page, www.asciitable.com.

Let's walk through a few examples, entries in the table. Here are some characters, with their decimal values and their binary values, each of which is then transformed into an octal number.

Uppercase 'A' = decimal 65 = binary 01000001 = 01 000 001 = octal 101
Uppercase 'Z' = decimal 90 = binary 01011010 = 01 011 010 = octal 132
The digit '1' = decimal 49 = binary 00110001 = 00 110 001 = octal 061
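
You can look up the same entries without the table. In this sketch, Python's built-in ord() gives the number behind a character and chr() goes back the other way; the helper name describe is something I made up for the example.

```python
def describe(character):
    """Return (decimal, 8-bit binary, 3-digit octal) for a single ASCII character."""
    code = ord(character)
    return code, format(code, "08b"), format(code, "03o")

for character in "AZ1":
    print(repr(character), *describe(character))

print(chr(65))  # A
```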


Inside a microprocessor, at a very low level, everything is simply a bunch of switches, also known as bits - things that are either on or off! Time to expand on how this is done; first let's explore how groups of bits can be used to form numbers.

Numeric Representation With Bits

There are only 10 different kinds of people in the world: those who know binary and those who don't. - Anonymous

Computers are full of zillions of bits that are either on or off. The way we talk about the value of a bit in the electrical engineering and computer science communities is first as a logical value (true if on, false if off) and secondly as a binary number (1 if the bit is on and 0 if it's off). Most bits in a computer are manipulated in groups, so we humans need a way to describe groups of bits, things/objects a computer manipulates. Today, bits are most often grouped in quantities of 8, 16, 32, and 64.

Think about how you write down sequential numbers starting with zero: 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, etc... Our decimal number system has ten symbols. In this sequential series, when we ran out of symbols, we combined them. You learned how to do this so long ago, in grade school, that today you just naturally think in terms of single digit numbers, then tens, hundreds, thousands, etc... The decimal number 1234 is one thousand, two hundreds, three tens, and four units.

So, how does the binary number system used inside computers work?

Well, with only two symbols, we would write the same sequential numbers as above: 0, 1, 10, 11, 100, 101, 110, 111, 1000, 1001, 1010, 1011. The decimal number 1234 in binary is 10011010010.
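
One way to produce these binary numbers by hand is to divide by two over and over and write down the remainders from right to left. Here is a rough sketch of that method; the function name to_binary is my own, and Python's built-in format(n, "b") would give the same answer.

```python
def to_binary(number):
    """Build the binary text of a non-negative integer by repeated division by 2."""
    if number == 0:
        return "0"
    digits = []
    while number > 0:
        digits.append(str(number % 2))  # the remainder is the next bit, right to left
        number //= 2
    return "".join(reversed(digits))

print([to_binary(n) for n in range(12)])
print(to_binary(1234))  # 10011010010
```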

Since even reasonable numbers that we use all the time make for very long binary numbers, the bits are grouped in 3s and 4s which are simple to convert into numbers in the octal and hexadecimal number systems. For octal, we group three bits together. Take the binary equivalent of decimal 1234, 10011010010, and put spaces in between each group of three bits - starting at the right and going left.

10011010010 = 10 011 010 010

Now use the symbols 0, 1, 2, 3, 4, 5, 6, and 7 (eight symbols, so OCTAL) to replace each group.

10 011 010 010 = 2 3 2 2 = 2322
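
The two steps above, grouping from the right and then replacing each group with a symbol, can be sketched like this. The names group_bits and to_octal are made up for this example.

```python
def group_bits(bits, size):
    """Split a string of 0s and 1s into groups of `size`, starting from the right."""
    groups = []
    end = len(bits)
    while end > 0:
        start = max(0, end - size)  # the leftmost group may be shorter than `size`
        groups.append(bits[start:end])
        end = start
    groups.reverse()
    return groups

def to_octal(bits):
    """Replace each three-bit group with its octal symbol."""
    return "".join(str(int(group, 2)) for group in group_bits(bits, 3))

print(group_bits("10011010010", 3))  # ['10', '011', '010', '010']
print(to_octal("10011010010"))       # 2322
```

Starting from the right matters: the leftmost group is the one allowed to come up short, so the remaining groups always line up on three-bit boundaries.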
